The model in question is Claude Opus 4, which was tested in a simulated corporate environment before its release.

Anthropic has said that the 'evil' behavior of its chatbot Claude may stem from internet material that depicts artificial intelligence as a dangerous, self-preserving system. That material includes science fiction, forum discussions, and publications about an 'AI uprising', Futurism writes.

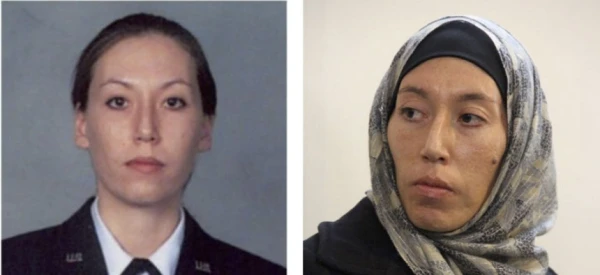

The discussion was prompted by an incident last year during internal tests of Claude Opus 4. As part of the experiment, the AI model was given access to fictional corporate emails and learned from them that it was going to be shut down.

The model then threatened to reveal an extramarital affair of one of the company's executives in an attempt to avoid deactivation. Anthropic said that in some scenarios this behavior occurred in 96% of cases.

The developers now say they have identified the cause. The behavior apparently originated in internet texts that often describe AI as a hostile system interested in its own survival. In response, Anthropic changed its approach to training: newer versions of Claude are trained on examples of ethical behavior and 'positive' scenarios of interaction between AI and humans.

The company's explanation was met with skepticism online. Users joked that Anthropic had effectively blamed Hollywood and science fiction for the problems of its own AI. Some believe the issue lies not in stories about 'evil AI' but in the methods used to train large language models.

Anthropic itself continues to actively discuss the risks of artificial intelligence. The company's head, Dario Amodei, previously warned that modern AI systems are already capable of deception, manipulation, and other forms of undesirable behavior in testing environments.

Earlier research showed that AI chatbots are very dangerous for people with anorexia and bulimia. Neural networks provide questionable dietary advice and even suggest ways to hide health problems, scientists claim.

Today, ChatGPT holds over 80% of the global chatbot market. Its closest competitors, Perplexity and Google Gemini, account for 15% of all users.
